That AI agent project your team was excited about three months ago? The one that was going to transform workflows and boost productivity? It’s sitting in limbo, gathering digital dust while everyone avoids the uncomfortable question: what went wrong?

You’re not alone. Research shows that 70% of AI initiatives lose momentum before delivering meaningful results. The good news? Most stalled projects can be revived—and the fixes are often simpler than you think.

What Does a Stalled AI Project Actually Look Like?

Stalled doesn’t always mean dead in the water. Sometimes it’s more subtle than that.

Maybe your AI agent is technically functional but no one uses it consistently. Or perhaps development has dragged on for months without clear milestones. You might have a tool that works perfectly for the original use case, but adoption has stagnated at 20% of your target users.

The telltale signs are consistent: dwindling meeting attendance, vague status updates, and that sinking feeling that you’re throwing good money after a project that’s lost its direction.

These projects don’t fail because the technology doesn’t work. They stall because the human elements (clarity, communication, and commitment) break down along the way. Understanding why AI implementations fail is the first step toward keeping yours from stalling too.

The Real Reasons AI Projects Lose Steam

Fuzzy Success Metrics

Here’s the uncomfortable truth: most AI projects start with enthusiasm but lack concrete, measurable outcomes. “Improve efficiency” or “enhance customer service” aren’t goals—they’re wishes.

When your team can’t clearly articulate what success looks like, momentum dies. People lose confidence because they can’t tell if they’re winning or losing.

Without specific targets, every small setback feels like failure. Every feature request becomes a priority. Every stakeholder has a different opinion about what the agent should do.

Users Weren’t Really Involved

Too many AI projects happen to people instead of with people. The IT team or leadership decides what would be helpful, builds it, and then expects enthusiastic adoption.

But people resist what they didn’t help create. If your end users weren’t meaningfully involved in defining the problem and shaping the solution, you’re fighting an uphill battle for adoption. Shutting people out of the process also feeds exactly the kind of identity anxiety that derails even well-intentioned AI rollouts.

This shows up as feature requests that miss the mark, workflows that feel forced, and users who find workarounds instead of embracing the new tool.

Scope Creep Killed the Timeline

AI projects are particularly vulnerable to scope creep because the technology feels limitless. Someone suggests the agent could also handle scheduling. Then reporting. Then customer inquiries.

Before you know it, your focused solution has become a Swiss Army knife that does everything poorly instead of one thing exceptionally well.

Each new requirement adds complexity, extends timelines, and dilutes the original value proposition. The project that was supposed to take 8 weeks enters month six with no clear end in sight. Sometimes the answer is to build custom, but even custom solutions need tight scope to succeed.

How to Diagnose Where Your Project Went Off Track

Before you can fix a stalled project, you need to understand what specifically broke down.

Start with a project autopsy. Gather your core team and ask three diagnostic questions:

Question 1: Can everyone clearly state the problem we’re solving? If you get different answers, you have an alignment issue. If the answers are vague (“make things more efficient”), you have a clarity problem.

Question 2: What would success look like to our actual users? This reveals whether you’ve been building for stakeholders or end users. The gap between these perspectives often explains adoption challenges.

Question 3: What changed since we started? Priorities shift, teams reorganize, budgets tighten. Sometimes projects stall simply because the organizational context evolved while the project stayed static. Budget surprises are a common culprit — the hidden costs of AI implementation often catch teams off guard midway through a project.

Be honest about what you discover. Acknowledging the real issues is the first step toward addressing them.

The Momentum Audit

Look at your project’s vital signs:

- When was the last productive team meeting?

- How many original stakeholders are still actively engaged?

- What percentage of planned features are actually being used?

- How often do users choose the old process over the new AI-powered one?

These metrics tell you whether you’re dealing with a technical problem, an adoption problem, or a strategic misalignment.
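If it helps to make the audit concrete, here is a minimal Python sketch that turns the four questions above into numbers you can track from one check-in to the next. Every name in it (`MomentumAudit`, `vital_signs`, the field names) is hypothetical and made up for illustration, and it assumes you can count things like engaged stakeholders and tasks done the old way. Treat it as a starting point, not a standard tool.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict


def _ratio(part: int, whole: int) -> float:
    """Return part/whole, or 0.0 when there is nothing to measure yet."""
    return part / whole if whole else 0.0


@dataclass
class MomentumAudit:
    """Hypothetical container for the four vital signs in the audit."""
    last_productive_meeting: date
    original_stakeholders: int
    engaged_stakeholders: int
    planned_features: int
    features_in_use: int
    tasks_via_old_process: int
    tasks_via_agent: int

    def vital_signs(self, today: date) -> Dict[str, float]:
        total_tasks = self.tasks_via_old_process + self.tasks_via_agent
        return {
            # When was the last productive team meeting?
            "days_since_productive_meeting": (today - self.last_productive_meeting).days,
            # How many original stakeholders are still actively engaged?
            "stakeholder_retention": _ratio(self.engaged_stakeholders, self.original_stakeholders),
            # What percentage of planned features are actually being used?
            "feature_usage": _ratio(self.features_in_use, self.planned_features),
            # How often do users choose the old process over the new one?
            "old_process_share": _ratio(self.tasks_via_old_process, total_tasks),
        }


# Illustrative numbers only, not real data.
audit = MomentumAudit(
    last_productive_meeting=date(2024, 1, 1),
    original_stakeholders=8,
    engaged_stakeholders=2,
    planned_features=10,
    features_in_use=3,
    tasks_via_old_process=30,
    tasks_via_agent=10,
)
for name, value in audit.vital_signs(today=date(2024, 1, 31)).items():
    print(f"{name}: {value}")
```

The sketch deliberately stops at reporting the numbers. Deciding whether they point to a technical problem, an adoption problem, or a strategic misalignment is still a judgment call for your team.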

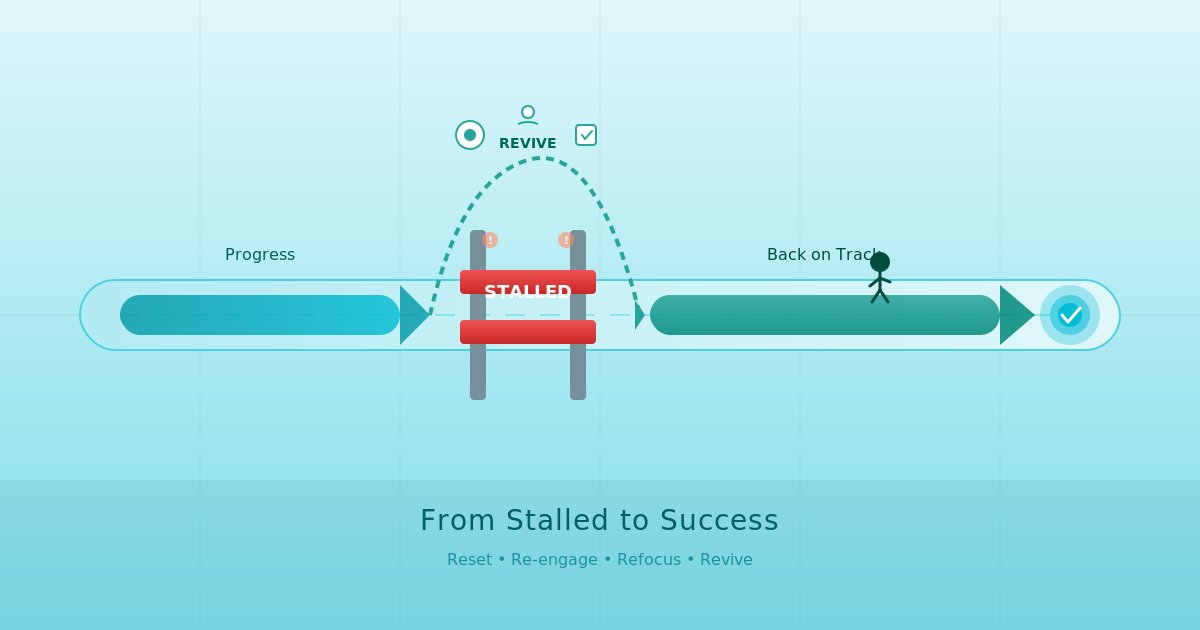

The Step-by-Step Revival Strategy

Step 1: Reset Expectations and Outcomes

Call a project reset meeting. Not a status update—a fundamental realignment.

Start by acknowledging that the current approach isn’t working. This isn’t about blame; it’s about creating space for honest conversation.

Then define success in concrete terms. Instead of “improve customer service,” try “reduce average response time from 4 hours to 30 minutes for routine inquiries.” Instead of “boost productivity,” specify “eliminate 2 hours of manual data entry per week for each team member.” Our guide on measuring ROI on your first AI agent walks through exactly how to set these measurable targets.
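One way to keep a target like this honest is to write it down as a baseline, a target, and a current reading. The Python sketch below does that for the response-time example. The `SuccessMetric` class and its `progress` method are hypothetical names invented for this illustration, and the current reading of 135 minutes is a made-up number.

```python
from dataclasses import dataclass


@dataclass
class SuccessMetric:
    """Hypothetical record of one concrete, measurable outcome."""
    name: str
    unit: str
    baseline: float  # where you started
    target: float    # where you have agreed to end up

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far, clamped to 0.0-1.0."""
        gap = self.target - self.baseline
        if gap == 0:
            return 1.0  # baseline already equals target
        fraction = (current - self.baseline) / gap
        return max(0.0, min(1.0, fraction))


# "Reduce average response time from 4 hours to 30 minutes for routine inquiries"
response_time = SuccessMetric(
    name="average response time for routine inquiries",
    unit="minutes",
    baseline=240,
    target=30,
)

# Assumed current reading of 135 minutes, for illustration only.
print(response_time.progress(135))  # 0.5
```

Because progress is measured against the gap between baseline and target, the same calculation works whether the goal is to push a number down (response time) or up (adoption).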

Write these outcomes where everyone can see them. They become your north star for every subsequent decision.

Step 2: Re-engage Your Actual Users

Your AI agent should augment human expertise, not replace human judgment. That’s the “partnership, not replacement” philosophy. But you can’t design for augmentation without deeply understanding the humans involved.

Schedule working sessions with your end users. Not requirements gathering meetings—actual collaborative design time where they help shape the solution.

Ask them to show you their current process, including the informal workarounds and shortcuts they’ve developed. These insights often reveal opportunities that formal documentation misses.

Let them test and iterate on prototypes. Their feedback should drive development priorities, not just influence them.

Step 3: Ruthlessly Prioritize Features

Take your expanded feature list and cut it in half. Then cut it in half again.

Every feature should directly support your concrete success metrics. If it doesn’t, it goes in a “future considerations” list that you won’t touch until the core functionality is working and adopted.
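As a rough illustration of that rule, the sketch below sorts a feature list into “core” and “future considerations” based on whether each feature is tied to one of your agreed success metrics. The `Feature` class, the `prioritize` function, and the sample features are all hypothetical. Your real list will look different.

```python
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple


@dataclass
class Feature:
    """Hypothetical feature entry; supports_metric is None if it serves no agreed metric."""
    name: str
    supports_metric: Optional[str] = None


def prioritize(
    features: List[Feature], success_metrics: Set[str]
) -> Tuple[List[Feature], List[Feature]]:
    """Split features into (core, future_considerations)."""
    core: List[Feature] = []
    future: List[Feature] = []
    for feature in features:
        if feature.supports_metric in success_metrics:
            core.append(feature)
        else:
            # Not tied to an agreed outcome: park it until the core is adopted.
            future.append(feature)
    return core, future


# Illustrative backlog only.
backlog = [
    Feature("auto-reply to routine inquiries", supports_metric="response_time"),
    Feature("meeting scheduling"),
    Feature("weekly reporting"),
]
core, future = prioritize(backlog, success_metrics={"response_time"})
print("Core:", [f.name for f in core])
print("Future considerations:", [f.name for f in future])
```

The check is intentionally blunt: a feature either maps to a metric you have written down or it waits.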

Focus creates momentum. A simple tool that solves a real problem generates enthusiasm. A complex tool that sort of addresses multiple issues generates confusion.

Defend this prioritization fiercely. When someone suggests adding “just one more small feature,” remind them that you’re rebuilding momentum, not building a comprehensive platform.

Step 4: Create Quick Wins

Momentum builds on momentum. Identify small improvements that users will notice immediately.

Maybe that’s fixing the three most annoying bugs. Or streamlining the login process. Or adding a simple dashboard that shows time saved.

These wins restore confidence in the project and demonstrate that progress is happening. They also re-engage stakeholders who had started to check out.

Communicate these wins clearly. People need to see that the project is alive and improving.

Building Sustainable Momentum Going Forward

Reviving a stalled project is only half the challenge. You also need to prevent it from stalling again.

Establish regular user feedback loops. Not quarterly reviews—weekly or bi-weekly check-ins where users can share what’s working and what isn’t. A people-first AI strategy bakes this kind of ongoing engagement into the fabric of your implementation.

Make iteration part of your culture. Your AI agent should evolve based on real usage patterns, not just original specifications.

Celebrate adoption milestones, not just technical milestones. When usage hits certain thresholds, when users report specific time savings, when the AI agent becomes part of someone’s daily routine—these are the wins that matter.

Keep the human partnership central. Your AI agent succeeds when it amplifies human capabilities, not when it impresses technologists. If you’re rebuilding momentum from scratch, our 90-day AI adoption timeline provides a structured framework for phased re-deployment.

Most stalled AI projects aren’t failing because of the technology. They’re stalling because of communication breakdowns, unclear expectations, and insufficient user involvement.

The good news? These are fixable problems. With clear outcomes, genuine user engagement, and focused execution, you can transform a stalled project into a success story. And don’t underestimate the role of effective change management in keeping that momentum alive once you’ve found it again.

Your AI agent can become the productivity partner your team actually wants to use—not just another tool they’re supposed to use.

This post is part of our complete guide to AI Agents for Business — covering what agents are, why implementations fail, and how to get started.